SUMMARY

- In late April, a “disinformation” summit was held at the University of Cambridge in the UK, attracting well-known figures from the international censorship industry.

- One UK politician boasted of his regional legislature’s plans to weaponize the judicial system to ban political candidates from running for office if they violate online censorship laws.

- Another panelist showcased a Chinese social media platform’s censorship techniques, describing the platform not as censored, but rather subject to a “very restrictive regulatory regime.”

- In her comments, former DHS Disinformation Governance Board head Nina Jankowicz described DHS’s sprawling censorship capacities, which existed in every sub-agency of the “Frankenstein” department.

- Future censorship techniques were discussed, including “psychological inoculation” — pre-biasing people against disfavored narratives before they encounter them in the wild. Panelists were torn on AI, with some emphasizing its potential to censor at scale, and others emphasizing its capability to amplify disfavored information.

The U.S. federal government’s rapid U-turn from pro-censorship to anti-censorship under the new administration has left the vast network of “disinformation” researchers scrambling for a path forward. A recent summit at the University of Cambridge in the United Kingdom brought together some well-known figures from the global government-funded censorship industry, giving us a glimpse into their future strategy.

Attendees at the meeting included the CEO of Bellingcat, an investigative outfit founded in the UK that got its start with funding from the National Endowment for Democracy. Also present was Nina Jankowicz, the former head of the short-lived DHS Disinformation Governance Board. Former National Security Council official and star Trump impeachment witness Lt. Col. Alexander Vindman was also in attendance. Other speakers and attendees included pro-censorship officials from the UK and Brazil, and a wide range of figures from the NGO and disinformation research complex.

The University of Cambridge is home to the Cambridge Social Decision-Making Lab (CSDMLab), a leading proponent of “psychological vaccines”: techniques to shape public opinion from the top down by “inoculating” the public against disfavored narratives, “preemptively injecting people with a severely weakened dose of fake news” (in the words of CSDMLab head Sander van der Linden). CSDMLab is funded by the U.S. taxpayer via DHS’s Cybersecurity and Infrastructure Security Agency, the same sub-agency that led the way in censoring the 2020 election.

A revealing set of remarks at the summit from Alice Marwick, a professor at the University of North Carolina, summed up the general tone: “disinformation” research is on the back foot, because large sections of the public now perceive it as censorship pressure.

By reframing disinformation research as censorship, you’re able to sort of paint everybody in a field, regardless of their race, gender, ethnicity, sexuality with this very broad brush and make them all seem like people who are, sort of, out to suppress ideas. And that’s a real problem…They’ve been very effective at communicating this narrative to the public at large. It came from this set of very coherent narratives that are being pushed everywhere from these sort of very low-level fringe groups, all the way up to the highest levels of the federal government.

We cannot cede ground… When you let another party frame the debate, you lose the debate before it’s even started. We have to actively advocate for the work that we do.

Marwick’s call to straightforwardly defend disinformation research seems to be falling on deaf ears. In recent months, there has been a move away from counter-disinformation as a pretext for online content regulation. The UK Online Safety Act, for example, which specifically regulates disinformation and hate speech, was justified by UK government ministers in media appearances entirely in terms of child safety rather than countering disinformation.

“Media Literacy” campaigners, too, are shifting the focus away from disinformation to child safety in a bid to justify their initiatives – a turnaround from 2022, when the U.S. government push for media literacy lessons in schools was framed predominantly around counter-disinformation work.

Judicial Supremacy

A common theme throughout the summit was the failure of previous interventions against so-called disinformation. One remedy to this issue was proposed by Adam Price, a UK politician and former leader of Plaid Cymru, an influential regional party in Wales, who argued that laws preventing the spread of “false rumors” are necessary to contain fascism.

Price then hailed his regional legislature for its plans to simply ban politicians from running for office if an “independent” judge found them guilty of disinformation.

And I’m very proud of the fact that my parliament and the government in Wales, the Welsh government, has said that we are going to be the first parliament, the first jurisdiction in the world, to make deliberate deception by politicians an offense which will lead to that disqualification from politics through an independent judicial process, not decided by other politicians. Damien’s right on this, you can’t have politicians deciding whether a statement by another politician is true. But we have an independent judicial process.

This “independent judicial process” has played out in other countries: the four indictments against Donald Trump, the ban of Jair Bolsonaro from running for re-election in Brazil, and, in France, the five-year ban on holding public office against National Rally leader Marine Le Pen.

In Romania, the first-round victory of a populist presidential candidate was annulled by judges on the grounds that he had been supported by “online disinformation” from Russia on TikTok and Telegram. (The U.S. government-funded Atlantic Council praised the nullification as an example of “democratic resilience.”) A new election was held, during which French officials reportedly urged Telegram founder Pavel Durov to censor supporters of the populist candidate.

The common theme in all of these cases is that the allegedly “independent” judiciary has exclusively targeted populist right-wing candidates, to the applause of the censorship industry and its supporters. Price’s plan represents an endgame of sorts: banning not just presidential candidates, but even regional political candidates if they are found to be spreading disfavored information and disfavored narratives.

Nor are ordinary citizens immune. The Economist recently reported that in the UK, 30 people a day are arrested for online posts. And in Germany, the Network Enforcement Act (NetzDG) of 2017 has led to thousands of online speech crime cases a year, with glowing coverage from U.S. media.

The Cambridge Disinformation Summit’s YouTube page also features a clip from Cambridge professor Alan Jagolinzer’s address to EU parliamentarians, telling European lawmakers that tech regulation on matters like disinformation and hate speech should go beyond financial fines against companies, and should instead target individual employees within companies.

We impose fines – fines on billionaires and fines on big tech companies and big multinational corporations will do almost nothing for behavioral change. And so we have to move away from fining around enforcement. That is not an efficacious tool, it is absolutely not. As well as the fact that it doesn’t name the human beings within the institutions who have committed the crimes that generated the fines in the first place. If you want true enforcement, you have to be willing to name human beings, and move beyond fining them.

Pointers from China

Another recurring theme at the summit was the seemingly relaxed attitude of attendees towards China, which maintains the strictest online censorship policy in the world. One speaker, Alex DiBranco, favorably contrasted Chinese-owned TikTok with American social media platforms like Facebook and X, arguing that TikTok took the mission of countering disinformation and hate speech more seriously than its American rivals.

Why? Because TikTok hired a former member of the Southern Poverty Law Center (SPLC), an American organization famed around the world for its pro-censorship bias and partisan slant.

And I think what I would say about technology companies is some platforms are better than others in terms of whether they care or not. Facebook traditionally does not care at all. TikTok actually has had hired somebody from the Southern Poverty Law Center to work on hate speech and [is] trying to understand it. And so I don’t know about on kind of a legal side incentivization, but on the kind of social side and what we invest in, there are technology platforms that are run by people who actually care and those who don’t.

And I think that we can see with Musk’s takeover of Twitter that he has now called X and people moving off that platform and onto BlueSky that people actually have the power to make or break a platform.

The claim that BlueSky represents the “breaking” of X is simply erroneous: BlueSky had grown to 35 million users as of May 2025, but this is scarcely 5 percent of X’s monthly active user count, which is estimated at 650 million. Moreover, BlueSky’s monthly usage has shown signs of decline, dropping below its November high of ~150 million in April.

Another speaker on China was Vicki Wei Tang of Georgetown University, who analyzed content moderation on an unnamed Chinese social network.

Wei Tang noted the vast scale of censorship on the platform, with 19 percent of all posts (over 37 million in total) deleted by content moderation systems in a six-month period. Of these, 13,051,062 were deleted because of “the government’s guidelines on cyber information.”

While Wei Tang did not praise the social network’s moderation policies, at no point in her lecture did she use the words “censor” or “censorship” — despite over 13 million posts being deleted due to “government guidelines.” Instead, Wei Tang simply referred to China’s approach as a “very restrictive regulatory regime.”

Weaponizing Computer Science Departments

Although it has been pushed out of power in D.C. and, to an extent, Silicon Valley, the censorship industry still enjoys overwhelming sympathy in the western world’s most ideologically slanted institution: the university. In both politics and corporations, there is now considerable mistrust towards the industry of “disinformation experts,” which is now correctly seen as a partisan censorship project. Not so in universities, which still host “disinformation summits” as if nothing has changed.

Universities are certainly a step down in power compared to the U.S. government and Big Tech companies, where pro-censorship views reigned supreme until very recently. Nevertheless, Bellingcat CEO Eliot Higgins is determined to make the most of it, telling the summit of his ambition to combine the computing resources of university computer science departments.

Technology that’s developed by computer science departments can be used throughout the entire network rather than it being stuck in one location. Say there’s a protest at a US university and the police start getting violent, which may happen in the next four years, rather than it just being up to those students to figure out what’s happening, the entire network can engage because that process is quite straightforward. And that’s actually something we’ve already done in Utrecht University with the open source Global Justice Investigation Lab. So we went in there, we developed course material, we set up the investigation lab. We did this in partnership with other organizations such as Amnesty and Air Wars and their first major investigation.

So their first major investigation was about police violence that targeted protestors at a pro-Palestinian protest. It showed that there were students who were targeted by excessive force, by the police. It created national media attention. It forced the police to respond, forced the university to respond because they were the ones who called the police in the first place. But this is something we’re currently working on with Birmingham, Nottingham, and Stirling University to expand. We’re hoping in the 25-26 school year to start implementing the first version of this in those universities.

Higgins did not say if there was a countering-disinformation element to his vision of a global university computer science blob. However, the Global Justice Investigation Lab that he referenced as an example counts the combating of “misinformation and fake news” as core to its mission.

Previously, university “disinformation research centers” have occasionally joined forces. The most notable example was 2020’s Election Integrity Partnership, which included the Stanford Internet Observatory and the University of Washington’s Center for an Informed Public. Presenting themselves to the public as “researchers,” this coalition built switchboarding systems to help state actors fast-track censorship requests to major tech companies.

The notion of community organizing from the top down seems to interest Higgins. In a later video address released by the Cambridge Disinformation Summit, Higgins discusses the concept of “counterpublics,” an academic term that originated in critical theory to describe political discourse that is dissident – oppositional, outside the mainstream.

Higgins draws a distinction between the “healthy” counterpublics associated with left-wing progressive activism like civil rights, and the “disordered” dissident counterpublics of the modern era.

We then come to, I think for me the key period is, we go through the 60s and 70s with the civil rights movement, the formation of these counterpublics. And for me, counterpublics form an important function in democracy when they operate within those verification, liberation, and accountability ideals. If they don’t, they turn into disordered counterpublics, which is what we have now.

He then goes on to say that “healthy” counterpublics ought to be funded by the taxpayer – effectively, regime-approved dissidents.

A lot of the services that were paid through taxes, the communities that were necessary for the formation of these healthy counterpublics kind of just disappeared. Because there wasn’t the money available for them. There wasn’t a good market need for having community centers.

There was also some recognition from Higgins of the censorship industry’s failures. The Bellingcat CEO pointed to “fact checking” as an example of a failed approach, criticizing it for its entirely reactive nature. “Fact-checking websites don’t actually engage in discourse and we have to create discourse,” said Higgins.

The Censorship Legacy of DHS

Nina Jankowicz, who held a brief and controversial position as disinformation “czar” at the Department of Homeland Security under the Biden Administration, claimed in recent comments that despite the many different agencies and sub-agencies of DHS, “all of them had different portfolios that dealt with disinformation.”

Jankowicz said her job as head of the short-lived Disinformation Governance Board was to “herd” the different counter-disinformation capacities of DHS, which she described as a “Frankenstein of a government agency.”

In 2022, after the better part of a decade studying Russian disinformation, I was tapped by the Biden administration to lead a coordination effort within the Department of Homeland Security called the Disinformation Governance Board. This was not a name I came up with — bad name. And the idea was that DHS, for those of you that don’t know, is kind of a Frankenstein of a government agency. Created after 9/11, includes everything from the folks who deal with our cybersecurity, to the folks who deal with natural disasters and the border and transportation, security, all of these things. Also, the Coast Guard for some reason. And all of them had different portfolios that dealt with disinformation. And when the administration came in, the Under Secretary, Rob Silvers kind of looked around and said, there’s not a lot of policy guiding what all of these disparate parts of DHS are doing.

FFO has previously reported on DHS’s extensive counter-disinformation activities, particularly during the 2020 election.

Jankowicz complained that critics characterized her Disinformation Governance Board as a “Ministry of Truth,” going on to argue that online censorship in the US is not “actually real,” unlike censorship in Russia and Belarus.

And folks on the far right as well as some on the far left as well, started calling the effort a Ministry of Truth. Now, let me be unequivocally clear, I had no power to police the internet. If that were my job, I wouldn’t have taken it. I am the granddaughter of somebody who was in a Russian gulag. I have stood up for Russian dissidents and Belarussian dissidents and folks in areas where censorship is actually real.

And these wild claims started going around the internet very quickly after the announcement. And what Debbie just said was so resonant for me. Behavior, bad behavior that is not punished is accepted. Right? Or you said it more eloquently than that. But essentially that’s what happened. DHS was like deer in headlights thought that if they did not address the rumors, these lies, that they would just go away. Like, oh, it’s just the Internet. It’s just people like Jack Poso talking about it. And Tucker Carlson going on a tirade about me. He called me a highly self-confident young woman, which he meant as an insult.

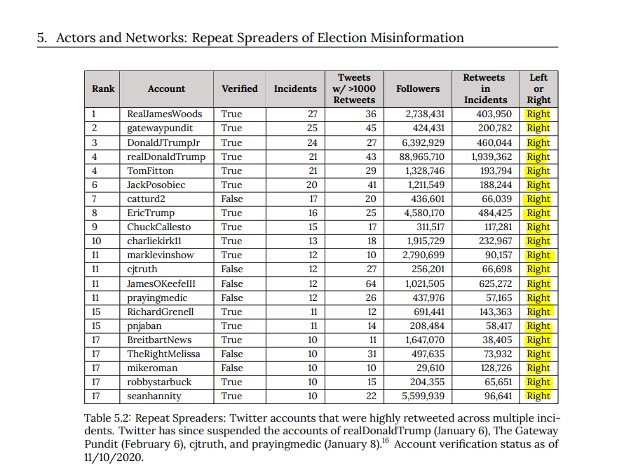

One of the commentators who Jankowicz singled out in her remarks, Jack Posobiec, has been targeted by DHS censorship efforts before. In 2020, he was placed on a list of seventeen Twitter accounts (all Trump supporters) described as “repeat spreaders of election misinformation” by the Election Integrity Partnership, the censorship coalition established by DHS to control online speech during that election.

Source – Election Integrity Partnership

Before she took on her role at DHS, Jankowicz was a writer and commentator with left-leaning views, and had widely promoted the false claim that then-candidate Donald Trump had colluded with the Russian government to win the 2016 presidential election. She had also suggested that the Hunter Biden laptop story was part of a Russian disinformation campaign.

More recently, the former U.S. government official condemned the current U.S. government as an “autocracy,” encouraging her audience of European Union officials to “stand firm” against it.

Despite her defense of her position and the Disinformation Governance Board, many lawmakers had grave concerns about the scope of the board, noting there were no known “boundaries” on what it did, in the words of Sen. James Lankford (R-OK). An investigation by Sens. Chuck Grassley (R-IA) and Josh Hawley (R-MO) suggested that DHS sought a “coordinating role” in online censorship. A 2022 DHS memo confirmed that the agency’s goal was to develop a “unified strategy” for censoring disinformation, using its cybersecurity capacity as a justification.

The sprawling nature of DHS’s counter-disinformation capacities has been highlighted by FFO before. A recent FFO report revealed that FEMA, the disaster relief agency operated under DHS, was used as a conduit to fund online censorship. FFO’s earliest research uncovered the scale of censorship at the Cybersecurity and Infrastructure Security Agency, which created the Election Integrity Partnership, the now well-known 2020 election censorship coalition exposed by FFO in 2022.

The Cambridge Disinformation Summit provides a glimpse into the future of online censorship. It shows the censorship industry taking stock of its reduced influence in Washington, D.C. and Silicon Valley, and attempting to adapt to recent changes.